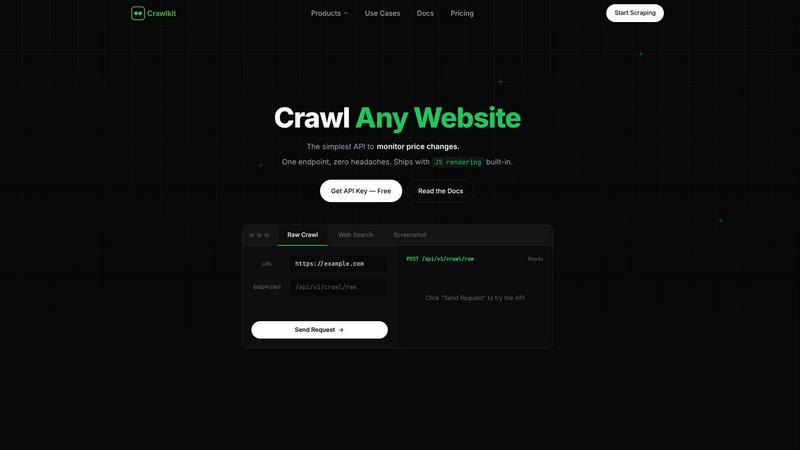

Crawlkit

Crawlkit provides a simple API to extract structured data and screenshots from any website with just one request.

About Crawlkit

Crawlkit is a web data extraction platform for developers and data teams that need scalable, reliable access to web data. It removes the need to build and maintain scraping infrastructure by handling rotating proxies, headless browsers, and anti-bot protections on the user's behalf. A single API request can return raw HTML, structured results, or visual snapshots, including data from platforms such as LinkedIn and Instagram, so developers can spend their time on data analysis rather than data collection. Trusted by leading tech companies, Crawlkit is positioned as a solution for building data pipelines and monitoring web activity at any scale.

Features of Crawlkit

Simplified API Access

Crawlkit exposes a single, consistent API for extracting structured data from multiple sources with one request. Developers integrate one interface instead of managing a separate API for each source, which makes it easier to add data extraction to existing workflows.
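To make the single-request idea concrete, here is a minimal sketch that builds (but does not send) one HTTP request. The endpoint URL, the `source` and `target` field names, and the bearer-token header are illustrative assumptions, not Crawlkit's documented API; the official reference is the authority on the real values.

```python
import json
import urllib.request

# Hypothetical endpoint: a placeholder, not Crawlkit's documented URL.
API_URL = "https://api.example.com/v1/extract"


def build_extract_request(api_key: str, source: str, target: str) -> urllib.request.Request:
    """Build one POST request; only `source` and `target` vary between data sources."""
    # Field names are assumptions made for this sketch.
    body = json.dumps({"source": source, "target": target}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# The same call shape serves different sources.
profile_req = build_extract_request(
    "YOUR_API_KEY", "linkedin", "https://www.linkedin.com/company/example"
)
page_req = build_extract_request("YOUR_API_KEY", "web", "https://example.com")
# Sending would be: urllib.request.urlopen(profile_req)
```

Because the request is plain JSON over HTTP, the same shape can be reproduced in any language that has an HTTP client.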

Automated Proxy Management

The platform automatically handles the complexities of proxy rotation and management. This feature ensures that users can access data without being blocked or slowed down by anti-bot measures, allowing for uninterrupted data collection and analysis.

Real-Time Data Validation

Crawlkit guarantees complete and accurate data by waiting for full page loads and validating responses before delivering them. This means users receive clean, structured data ready for use, significantly reducing the time spent on data cleaning and error handling.
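Teams that want a second line of defence can run a similar completeness check on their own side before data enters a pipeline. The sketch below is a client-side guard under assumed field names (`name`, `followers`); it does not describe how Crawlkit performs its own validation.

```python
def validate_payload(payload: dict, required: tuple) -> list:
    """Return a list of problems; an empty list means the payload looks complete."""
    problems = []
    for field in required:
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif payload[field] in (None, "", [], {}):
            # Present but empty values are treated as incomplete data.
            problems.append(f"empty field: {field}")
    return problems


# Hypothetical payloads, for illustration only.
complete = validate_payload({"name": "Example Corp", "followers": 1200}, ("name", "followers"))
broken = validate_payload({"name": ""}, ("name", "followers"))
```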

Flexible, Credit-Based Pricing

Crawlkit operates on a transparent credit-based pricing model, where users pay only for the requests they make. With no hidden fees and the ability to purchase credits in bulk for discounted rates, this flexible pricing structure makes it accessible for businesses of all sizes.
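A credit model makes costs easy to estimate up front. The following sketch shows the arithmetic; the credits per request, price per credit, and discount rate are made-up numbers chosen for illustration, not Crawlkit's actual prices.

```python
def estimate_cost(requests: int, credits_per_request: int, price_per_credit: float,
                  bulk_discount: float = 0.0) -> float:
    """Return the total price for a batch of requests under a credit model."""
    if not 0.0 <= bulk_discount < 1.0:
        raise ValueError("bulk_discount must be in [0, 1)")
    credits_needed = requests * credits_per_request
    return round(credits_needed * price_per_credit * (1.0 - bulk_discount), 2)


# Hypothetical numbers: 10,000 requests, 1 credit each, 0.002 per credit.
standard = estimate_cost(10_000, 1, 0.002)
# Same batch with an assumed 20% bulk discount.
discounted = estimate_cost(10_000, 1, 0.002, bulk_discount=0.20)
```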

Use Cases of Crawlkit

Lead Generation for Sales Teams

Crawlkit can automatically enrich customer relationship management (CRM) systems with data from LinkedIn. By extracting job titles, company information, and contact details, sales teams can generate high-quality leads efficiently.
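As a rough illustration of the enrichment step, the sketch below merges extracted fields into an existing CRM record without overwriting values a salesperson has already entered. The record layout and field names are assumptions for this example, not a Crawlkit or CRM schema.

```python
def enrich_record(crm_record: dict, extracted: dict) -> dict:
    """Fill empty CRM fields from extracted data; existing values are kept."""
    enriched = dict(crm_record)  # copy so the original record is not mutated
    for field, value in extracted.items():
        if value and not enriched.get(field):
            enriched[field] = value
    return enriched


# Hypothetical data, for illustration only.
crm_record = {"name": "Ada Example", "job_title": "", "company": "Example Corp"}
extracted = {"job_title": "Head of Data", "company": "Example Corporation", "location": "Berlin"}
enriched = enrich_record(crm_record, extracted)
```

Keeping existing values is a deliberate choice here: hand-entered CRM data is usually treated as more trustworthy than scraped data, though a real integration might prefer the fresher source.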

Social Media Monitoring

Businesses can use Crawlkit to track competitors' Instagram growth metrics, such as follower counts and engagement rates. This information enables companies to analyze trends, adapt their strategies, and identify top-performing content over time.
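Once follower counts and interaction totals have been collected at two points in time, the growth metrics are simple ratios. The sketch below assumes a hypothetical `Snapshot` structure and defines engagement rate as average interactions per post divided by followers, which is one common convention among several.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    followers: int
    likes: int      # total likes on posts in the sampling window
    comments: int   # total comments on posts in the sampling window
    posts: int      # number of posts in the sampling window


def follower_growth(old: Snapshot, new: Snapshot) -> float:
    """Relative follower change between two snapshots."""
    return (new.followers - old.followers) / old.followers


def engagement_rate(s: Snapshot) -> float:
    """Average interactions per post, divided by followers."""
    return (s.likes + s.comments) / s.posts / s.followers


# Hypothetical snapshots taken a month apart.
last_month = Snapshot(followers=10_000, likes=4_000, comments=500, posts=10)
this_month = Snapshot(followers=10_500, likes=4_800, comments=450, posts=10)
growth = follower_growth(last_month, this_month)
rate = engagement_rate(this_month)
```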

App Review Analysis

Crawlkit can pull app reviews from platforms such as the App Store and Play Store. This data helps businesses understand user sentiment, identify common issues, and improve their products based on user feedback.
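A first pass at review analysis can be as simple as averaging ratings and counting how often known problem keywords appear. The sketch below assumes each review is a dictionary with `rating` and `text` keys; that shape is an assumption for this example, not Crawlkit's response format.

```python
from collections import Counter


def summarize_reviews(reviews: list, keywords: list) -> dict:
    """Return review count, average rating, and per-keyword mention counts."""
    ratings = [r["rating"] for r in reviews]
    mentions = Counter()
    for review in reviews:
        text = review["text"].lower()
        for keyword in keywords:
            # Substring match, so "crash" also counts "crashes".
            if keyword in text:
                mentions[keyword] += 1
    average = sum(ratings) / len(ratings) if ratings else None
    return {"count": len(reviews), "average_rating": average, "mentions": dict(mentions)}


# Hypothetical reviews, for illustration only.
reviews = [
    {"rating": 5, "text": "Love it, fast sync"},
    {"rating": 2, "text": "App crashes on login"},
    {"rating": 1, "text": "Crash after update"},
]
summary = summarize_reviews(reviews, ["crash", "login"])
```

Keyword counting is only a starting point; proper sentiment analysis would need a dedicated model or library.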

Market Research and Competitive Intelligence

Organizations can leverage Crawlkit to gather data about competitors' offerings, pricing, and marketing strategies from various websites. This information serves as valuable insight for businesses looking to refine their strategies and stay ahead in the market.

Frequently Asked Questions

What types of data can I extract using Crawlkit?

Crawlkit allows users to extract a variety of data types, including structured data from social media platforms, app stores, and websites. This includes company profiles, user engagement metrics, and search results.

Is there a limit to how many requests I can make?

Crawlkit uses a credit-based system for requests, allowing users to make as many requests as they have credits for. There are no monthly commitments or rate limits, providing flexibility in how data can be accessed.

How does Crawlkit ensure data accuracy?

Crawlkit waits for full page loads and performs validation checks before returning data to users. This process minimizes the risk of receiving incomplete or broken data, ensuring high-quality outputs.

Can I use Crawlkit with any programming language?

Yes, Crawlkit is designed as a simple HTTP API that can be integrated into any programming language or platform. This versatility allows developers to use Crawlkit seamlessly with their existing tools and frameworks.

Top Alternatives to Crawlkit

TrafficClaw

Talk to your SEO & Analytics data - it finally talks back

SiteMd

SiteMD instantly scans your website for SEO, speed, security, and broken link issues, delivering a plain-English health report.

Fusedash

Fusedash transforms raw data into interactive dashboards and charts for instant insights and informed decision-making.

Idearium

Idearium creates impactful websites and marketing strategies that drive growth and enhance online visibility for your.

Linkfinder AI

LinkFinder AI instantly enriches your leads with complete company details and contact information.

BlitzAPI

BlitzAPI provides clean B2B data through powerful APIs to enhance your growth team's go-to-market strategies.

echoloc

Echoloc finds sales-ready companies by analyzing hiring signals in their job postings.

FilexHost

Easily host and share any file with a simple drag and drop, getting a live URL in seconds.