kill the sub vs OpenMark AI

Side-by-side comparison to help you choose the right product.

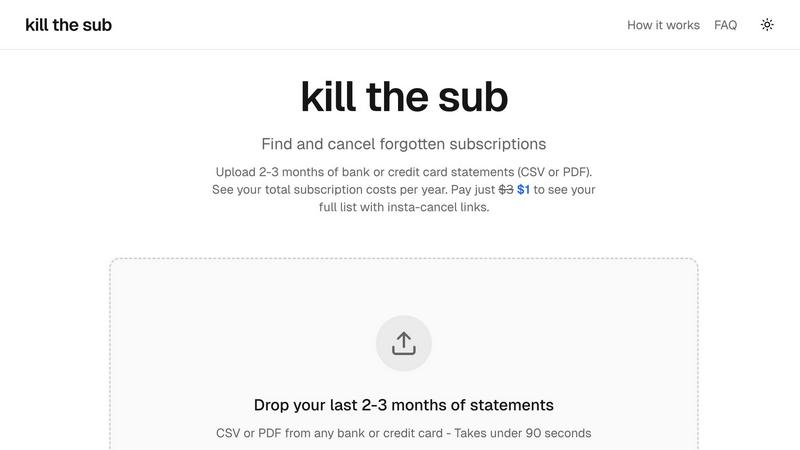

kill the sub

Easily find and cancel forgotten subscriptions in minutes with our AI tool, saving you money and hassle for just $3.

OpenMark AI

OpenMark AI benchmarks over 100 LLMs on your specific task for cost, speed, quality, and stability without requiring API keys.

Last updated: March 26, 2026

Overview

About kill the sub

Kill the Sub is a tool that helps individuals manage their subscriptions and cut unnecessary expenses. Subscriptions accumulate easily, and it is common to forget about recurring payments that drain your finances. Kill the Sub simplifies the cleanup: users upload their recent bank or credit card statements, and within two minutes the tool identifies all recurring charges and shows an overview of their annual subscription costs. The service is aimed at everyone from busy professionals to college students who would rather not track subscriptions by hand. Pricing is transparent, with no hidden fees, and Kill the Sub guarantees a refund if you do not save more than three dollars. The goal is financial peace of mind and control over your subscriptions.
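To make the idea of spotting recurring charges concrete, here is a minimal Python sketch. It is not Kill the Sub's actual method, which the source does not describe. It assumes a simple heuristic: the same merchant charging the same amount at roughly monthly intervals counts as a subscription. The function name `find_recurring`, its parameters, and the sample merchants are all hypothetical.

```python
from collections import defaultdict
from datetime import date


def find_recurring(transactions, min_occurrences=3, tolerance_days=5):
    """Return {merchant: estimated annual cost} for charges that look monthly.

    Hypothetical heuristic for illustration only: a charge is treated as a
    subscription when the same merchant bills the same amount at least
    `min_occurrences` times, with gaps of about 30 days between charges.
    """
    dates_by_charge = defaultdict(list)
    for merchant, amount, when in transactions:
        dates_by_charge[(merchant, amount)].append(when)

    annual = {}
    for (merchant, amount), dates in dates_by_charge.items():
        if len(dates) < min_occurrences:
            continue
        dates.sort()
        gaps = [(later - earlier).days for earlier, later in zip(dates, dates[1:])]
        if all(abs(gap - 30) <= tolerance_days for gap in gaps):
            annual[merchant] = round(amount * 12, 2)
    return annual


# Made-up statement rows: (merchant, amount in dollars, charge date).
statement = [
    ("StreamFlix", 15.99, date(2026, 1, 3)),
    ("StreamFlix", 15.99, date(2026, 2, 3)),
    ("StreamFlix", 15.99, date(2026, 3, 3)),
    ("Corner Cafe", 4.50, date(2026, 1, 10)),
    ("Corner Cafe", 6.25, date(2026, 2, 14)),
]
recurring = find_recurring(statement)
print(recurring)
```

In this sample only the streaming charge qualifies, because the cafe purchases vary in amount and occur too few times. A real statement would also need handling for annual plans, price changes, and inconsistent merchant names, which this sketch leaves out.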

About OpenMark AI

OpenMark AI is a web-based platform for task-level benchmarking of Large Language Models (LLMs). It helps developers, product teams, and AI practitioners make data-driven decisions when selecting AI models for their applications. The core value proposition is moving beyond theoretical datasheets and marketing claims to evaluate models on real performance for a specific task. Users describe their objective in plain language (for example data extraction, classification, or creative writing), and OpenMark AI executes the same prompts across a catalog of over 100 models in a single session. The platform compares models across four dimensions: the scored quality of outputs, the actual cost per API request, latency, and, crucially, the stability of results across multiple runs to reveal variance. This reduces the guesswork and risk of relying on a single, potentially "lucky" output. By using a hosted credit system, it removes the friction of configuring and managing multiple API keys from providers like OpenAI, Anthropic, and Google, streamlining pre-deployment validation so that the chosen model is cost-efficient, reliable, and fit for purpose.
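The point about stability across multiple runs can be illustrated with a short Python sketch. It does not use or reflect OpenMark AI's API or scoring method, neither of which the source specifies. It assumes you already have quality scores from repeated runs of the same prompt; the function `summarize_runs`, the model names, and the scores are made up for illustration.

```python
from statistics import mean, pstdev


def summarize_runs(scores_by_model):
    """Return the mean score and run-to-run spread for each model.

    `spread` is the population standard deviation of the scores, used here
    as one possible stability measure (an assumption, not the platform's).
    """
    return {
        model: {"mean": mean(scores), "spread": pstdev(scores)}
        for model, scores in scores_by_model.items()
    }


# Hypothetical quality scores from three runs of the same prompt.
runs = {
    "model-a": [0.90, 0.50, 0.70],
    "model-b": [0.70, 0.70, 0.70],
}
summary = summarize_runs(runs)
for model, stats in summary.items():
    print(model, stats)
```

Both hypothetical models average about the same score, but a single run of `model-a` could land on 0.90 or 0.50. Looking at the spread alongside the mean is what separates a consistently adequate model from one that occasionally gets lucky.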