Hostim.dev vs OpenMark AI

Side-by-side comparison to help you choose the right product.

Hostim.dev

Hostim.dev simplifies Docker app deployment with built-in databases on fast, secure, EU-hosted infrastructure.

Last updated: March 1, 2026

OpenMark AI

OpenMark AI benchmarks over 100 LLMs on your specific task for cost, speed, quality, and stability without requiring API keys.

Last updated: March 26, 2026

Visual Comparison

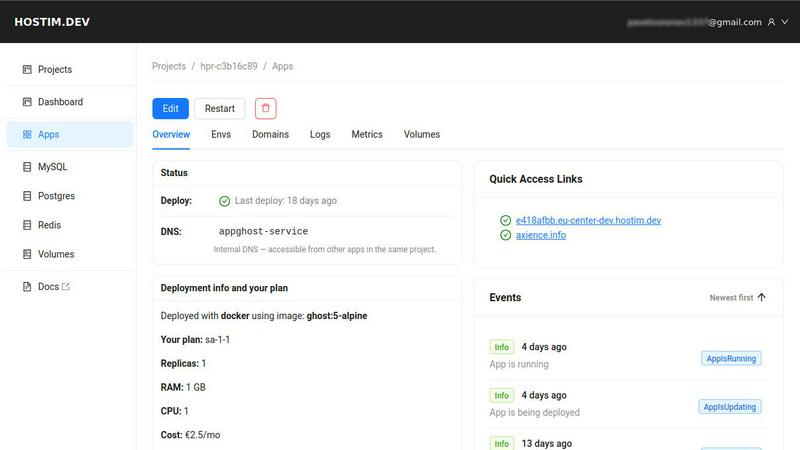

Hostim.dev

OpenMark AI

Feature Comparison

Hostim.dev

Seamless Deployment Options

Hostim.dev lets developers deploy applications from a Docker image, a Git repository, or a Docker Compose file. Users can paste their Docker Compose file and go live within minutes, without extensive DevOps knowledge.

Managed Databases and Storage

The platform provides built-in database solutions such as MySQL, PostgreSQL, and Redis, along with persistent storage volumes. These services are provisioned instantly and come pre-wired, allowing developers to focus on building rather than managing infrastructure.

Automatic Scaling

Hostim.dev lets you scale CPU and RAM directly from the user interface. Changes apply with zero downtime, so developers can adjust an application's resources in real time as demand changes.

Secure and Isolated Environments

Hostim.dev enables automatic HTTPS by default and provides live logs and metrics. Each project runs in its own isolated environment, which keeps different clients or applications cleanly separated.

OpenMark AI

Plain Language Task Configuration

You can define the exact task you want to benchmark using simple, descriptive language without writing complex code or scripts. The platform guides you through setting up the prompt, expected output format, and evaluation criteria. This intuitive interface makes sophisticated benchmarking accessible to both technical and non-technical team members, ensuring the test accurately reflects the real-world use case you intend to build.

Multi-Model Comparative Analysis

Run your defined task against a wide selection of models simultaneously in one coordinated session. OpenMark AI manages the API calls to all providers and presents the results in a unified, side-by-side dashboard. This allows direct comparison of performance metrics across model families and vendors, and shows which model performs best on your task rather than on generic benchmarks.

Real Cost & Performance Metrics

The platform reports actual, incurred costs from real API calls and measures true latency, giving you accurate financial and operational data for planning. More importantly, it scores output quality based on your task's criteria and runs multiple iterations to show stability and variance. This reveals not just if a model can get the task right once, but how consistently it performs and what the reliable cost-to-quality ratio is.

Hosted Benchmarking with Credits

OpenMark AI operates on a credit system, eliminating the need for users to provision, manage, and pay for separate API keys from multiple AI providers. This significantly reduces setup complexity and administrative overhead. You purchase credits from OpenMark and consume them to run benchmarks, streamlining the entire testing workflow and enabling rapid, secure experimentation without configuring external accounts.

Use Cases

Hostim.dev

For Freelancers

Freelancers can leverage Hostim.dev to quickly deploy applications for clients without the overhead of managing servers or complex configurations. The per-project billing model simplifies cost tracking and ensures clear communication with clients regarding expenses.

For Agencies

Agencies can utilize Hostim.dev to manage multiple client projects efficiently. Each project can be isolated within its own environment, allowing for streamlined operations and clear cost breakdowns, making project handovers seamless.

For Startups

Startups can benefit from the rapid deployment capabilities of Hostim.dev. With built-in databases and storage, they can focus on developing their applications without worrying about the underlying infrastructure, allowing them to bring their products to market faster.

For Students

Students can use Hostim.dev to gain hands-on experience with real infrastructure while learning Docker and application deployment. The free trial and student credits make it an accessible platform for building portfolio projects that showcase their skills.

OpenMark AI

Pre-Deployment Model Selection

Before integrating an LLM into a production feature, development teams can use OpenMark AI to empirically test candidate models on prototypes of their actual tasks. This validates which model delivers the required accuracy, tone, and format at an acceptable cost and latency, ensuring a confident, evidence-based selection that aligns with both technical and business requirements prior to shipping.

Cost Efficiency Optimization

For applications with high-volume or recurring AI usage, even small cost differences per request can have major financial implications. OpenMark AI helps identify the most cost-effective model that still meets quality thresholds. Teams can compare the real API cost against scored output quality to find the optimal balance, moving beyond just selecting the model with the cheapest listed token price.
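
The selection rule described above can be sketched in a few lines of Python. This is a minimal illustration, not OpenMark AI's implementation: the `BenchmarkResult` type, the `cheapest_above_threshold` helper, the model labels, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BenchmarkResult:
    model: str               # hypothetical model label
    cost_per_request: float  # measured cost per request (made-up values below)
    quality: float           # scored quality in [0, 1]


def cheapest_above_threshold(results, min_quality):
    """Return the lowest-cost result whose quality meets the threshold, or None."""
    eligible = [r for r in results if r.quality >= min_quality]
    if not eligible:
        return None
    return min(eligible, key=lambda r: r.cost_per_request)


# Made-up numbers for illustration only.
results = [
    BenchmarkResult("model-a", 0.0040, 0.93),
    BenchmarkResult("model-b", 0.0009, 0.71),
    BenchmarkResult("model-c", 0.0015, 0.90),
]

best = cheapest_above_threshold(results, min_quality=0.85)
print(best.model)  # model-c: cheaper than model-a, and model-b misses the bar
```

Filtering on quality first and minimizing cost second keeps the cheapest model from winning by default when it fails the task.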

Consistency and Reliability Validation

Testing a model's output across multiple runs is crucial for features requiring deterministic or highly reliable behavior. OpenMark AI's stability analysis shows variance in responses, helping teams avoid models that are inconsistent or prone to erratic outputs. This is essential for building user trust in AI-powered features like customer support, content moderation, or data processing.

Agent Routing and Workflow Design

When designing complex AI agent systems where different tasks are routed to specialized models, OpenMark AI is ideal for benchmarking each sub-task. Teams can determine the best model for classification, the best for summarization, and the best for creative generation within the same workflow, creating an optimized and cost-aware multi-model architecture based on empirical data.
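
As a rough sketch of how per-task benchmark results could feed a routing table, the Python below picks the highest-scoring model for each sub-task and breaks ties by lower cost. The `build_routing_table` function, the row layout, the model labels, and the numbers are all assumptions made for illustration; they do not describe an OpenMark AI export format.

```python
def build_routing_table(rows):
    """Map each sub-task to its best model: highest quality, then lowest cost."""
    best = {}
    for task, model, quality, cost in rows:
        rank = (quality, -cost)  # larger tuple wins
        if task not in best or rank > best[task][0]:
            best[task] = (rank, model)
    return {task: model for task, (_rank, model) in best.items()}


# (sub_task, model, quality, cost_per_request): made-up values.
rows = [
    ("classification", "model-a", 0.95, 0.0040),
    ("classification", "model-b", 0.95, 0.0009),
    ("summarization", "model-a", 0.91, 0.0060),
    ("summarization", "model-b", 0.70, 0.0012),
    ("generation", "model-c", 0.88, 0.0100),
]

routes = build_routing_table(rows)
print(routes)
```

Here `model-b` takes classification because it ties on quality at a lower cost, while `model-a` keeps summarization on quality alone.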

Overview

About Hostim.dev

Hostim.dev is a developer-centric Platform-as-a-Service (PaaS) for deploying and managing containerized applications. It removes traditional infrastructure work such as server provisioning and Kubernetes orchestration, so developers can deploy quickly. Applications can be deployed from existing Docker images, Git repositories, or full Docker Compose files, often without code rewrites.

The platform emphasizes simplicity, transparency, and control, which suits individual developers, freelancers, startups, and agencies. Each project is isolated in its own Kubernetes namespace, keeping different clients or applications cleanly separated. Pricing starts at €2.50 per month, hosting is GDPR-compliant and located in Germany, and a 5-day free trial is available without a credit card.

About OpenMark AI

OpenMark AI is a web-based platform for task-level benchmarking of Large Language Models (LLMs). It helps developers, product teams, and AI practitioners make data-driven decisions when selecting AI models for their applications. The core value proposition is moving beyond datasheets and marketing claims to evaluate models on real performance for a specific task. Users describe their objective in plain language (for example data extraction, classification, or creative writing), and OpenMark AI executes the same prompts across a catalog of over 100 models in a single session.

The platform compares models across four dimensions: the scored quality of outputs, the actual cost per API request, latency, and the stability of results across multiple runs. Measuring variance removes the risk of relying on a single, potentially "lucky" output. A hosted credit system removes the friction of configuring and managing API keys from providers like OpenAI, Anthropic, and Google, which streamlines pre-deployment validation of whether the chosen model is cost-efficient, reliable, and fit for purpose.

Frequently Asked Questions

Hostim.dev FAQ

What does the free tier include?

The free tier is a 5-day trial with no credit card required. During the trial, users can explore Hostim.dev's deployment features, including the built-in databases and storage options.

Can I deploy with just a Compose file?

Yes, Hostim.dev allows you to deploy applications directly from a Docker Compose file. This feature simplifies the deployment process, enabling you to go live quickly without additional configuration.

Where is my app hosted?

All applications deployed on Hostim.dev are hosted on bare-metal servers located in Germany. Data stays within the EU, which supports GDPR compliance and gives users a secure and reliable hosting environment.

Do I need to know Kubernetes?

No, you do not need to know Kubernetes to use Hostim.dev. The platform abstracts away Kubernetes management, so developers can deploy their applications without Kubernetes expertise.

OpenMark AI FAQ

How does OpenMark AI score the quality of model outputs?

OpenMark AI uses the evaluation criteria you define when setting up your task to score outputs. This can involve automated checks for format correctness, keyword presence, or semantic similarity to a reference answer, as well as manual scoring rubrics. The platform aggregates scores across multiple runs to provide a reliable quality metric tailored to your specific success criteria.
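
To make the automated checks concrete, here is a minimal Python sketch that combines a format check with a keyword check. It is an assumption-laden illustration, not OpenMark AI's scoring code: the `score_output` function, the JSON format requirement, the equal weighting, and the sample outputs are all hypothetical.

```python
import json


def score_output(output, required_keys, keywords):
    """Score one model output in [0, 1] from two automated checks."""
    # Format check: output must be a JSON object containing every required key.
    try:
        parsed = json.loads(output)
        format_ok = isinstance(parsed, dict) and all(k in parsed for k in required_keys)
    except json.JSONDecodeError:
        format_ok = False

    # Keyword check: fraction of expected keywords present (case-insensitive).
    text = output.lower()
    hits = sum(1 for kw in keywords if kw.lower() in text)
    keyword_score = hits / len(keywords) if keywords else 1.0

    # Equal weighting is an arbitrary choice for this sketch.
    return 0.5 * float(format_ok) + 0.5 * keyword_score


good = '{"invoice_id": "A-17", "total": "42.00 EUR"}'
bad = "The invoice total is 42.00 EUR"

print(score_output(good, ["invoice_id", "total"], ["A-17", "EUR"]))  # 1.0
print(score_output(bad, ["invoice_id", "total"], ["A-17", "EUR"]))   # 0.25
```

Semantic similarity to a reference answer and manual rubrics would need additional components that this sketch leaves out.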

Do I need my own API keys to use OpenMark AI?

No, you do not need to configure separate API keys from OpenAI, Anthropic, Google, or other providers. OpenMark AI operates on a hosted credit system. You purchase credits through OpenMark and use them to run benchmarks. The platform manages all the underlying API calls and costs, simplifying the process and centralizing billing.

What is the difference between a single run and testing for stability?

A single run gives you one data point, which could be an outlier or "lucky" output. Testing for stability involves running the same prompt against the same model multiple times. OpenMark AI shows the variance in cost, latency, and quality scores across these repeat runs, giving you a realistic understanding of the model's consistency and reliability in production.
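
The difference can be illustrated with a short Python sketch that summarizes repeated quality scores for one model on one prompt. The `stability_summary` helper and both score lists are made up for illustration; the statistics OpenMark AI actually reports may differ.

```python
from statistics import mean, stdev


def stability_summary(scores):
    """Summarize repeated quality scores for one model on one prompt."""
    spread = stdev(scores) if len(scores) > 1 else 0.0  # sample standard deviation
    return {"mean": mean(scores), "stdev": spread, "min": min(scores), "max": max(scores)}


# Made-up scores from five repeat runs of the same prompt.
steady = [0.90, 0.91, 0.89, 0.90, 0.90]
erratic = [0.99, 0.62, 0.95, 0.58, 0.97]

for name, scores in [("steady", steady), ("erratic", erratic)]:
    s = stability_summary(scores)
    print(f"{name}: mean={s['mean']:.2f} stdev={s['stdev']:.2f} "
          f"range={s['min']:.2f}-{s['max']:.2f}")
```

A single run of the erratic model could return 0.99 and look like the better choice; only the repeat runs expose its wider spread.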

What kinds of tasks can I benchmark with OpenMark AI?

You can benchmark a wide variety of tasks, including but not limited to text classification, translation, data extraction from documents, question answering, content generation, summarization, code writing, and agent-based reasoning. The platform is designed to be flexible, allowing you to describe and test virtually any prompt-based task you would send to an LLM.

Alternatives

Hostim.dev Alternatives

Hostim.dev is a modern, developer-centric Platform-as-a-Service (PaaS) that simplifies the deployment of Docker applications with built-in database support on a fast, fully EU-hosted infrastructure. As a bare-metal PaaS, it eliminates the complexities associated with infrastructure management, allowing developers to focus on building and deploying their applications without the overhead of DevOps tasks. Users often seek alternatives to Hostim.dev for various reasons, including pricing structures, specific feature sets, and differing platform needs. When choosing an alternative, it is crucial to consider factors such as deployment flexibility, ease of use, built-in services, security features, and compliance with local regulations to ensure that the platform aligns with your project requirements and provides the necessary support for your development workflow.

OpenMark AI Alternatives

OpenMark AI is a developer tool for task-level benchmarking of large language models. It allows teams to test many LLMs simultaneously on their specific use case, comparing real-world metrics like cost, latency, output quality, and stability from actual API calls, all within a browser. Users may explore alternatives for various reasons, such as budget constraints, a need for different feature sets like automated testing integration, or a preference for self-hosted solutions that offer more data control. Some may seek tools with a stronger focus on ongoing production monitoring rather than pre-deployment validation. When evaluating other options, key considerations include the scope of supported models, the depth of performance analytics, data privacy and security practices, and the overall workflow integration. The goal is to find a solution that provides actionable, trustworthy data to inform your model selection without unnecessary complexity.