diffray vs Fallom

Side-by-side comparison to help you choose the right product.

diffray

Diffray uses AI agents to catch real bugs in your code, not just nitpicks.

Last updated: February 28, 2026

Fallom

Fallom is an AI observability platform for tracking and optimizing LLM and agent operations.

Last updated: February 28, 2026

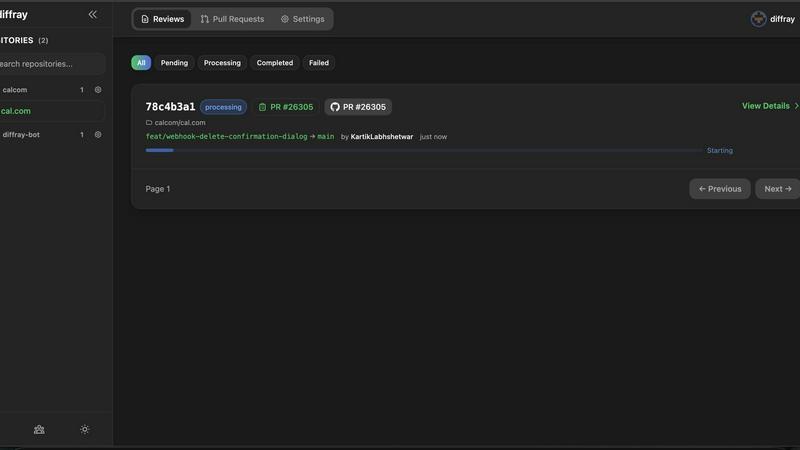

Feature Comparison

diffray

Multi-Agent AI Architecture

Unlike single-model AI tools that provide generalized feedback, diffray employs a team of over 30 specialized AI agents. Each agent is fine-tuned for a specific domain, such as detecting security flaws like SQL injection, identifying performance anti-patterns, spotting common bug categories, or enforcing framework-specific best practices. This division of labor ensures deep, expert-level analysis across every facet of your code, leading to more accurate and comprehensive reviews than a monolithic AI could provide.
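The division of labor described above can be pictured as a small dispatcher that hands the same change to several narrow reviewers and merges their findings. The sketch below is only an illustration of that pattern: plain functions with toy regex checks stand in for AI agents, and every name in it (`Finding`, `security_agent`, `bug_agent`, `review`) is hypothetical rather than part of diffray.

```python
import re
from typing import NamedTuple


class Finding(NamedTuple):
    agent: str
    line_no: int
    message: str


def security_agent(lines):
    # Toy stand-in for a security specialist: flags likely hard-coded secrets.
    pattern = re.compile(r"(password|secret|api_key)\s*=\s*['\"]", re.IGNORECASE)
    return [Finding("security", n, "possible hard-coded secret")
            for n, text in lines if pattern.search(text)]


def bug_agent(lines):
    # Toy stand-in for a bug-pattern specialist: flags bare except clauses.
    return [Finding("bugs", n, "bare 'except:' hides errors")
            for n, text in lines if text.strip() == "except:"]


# Hypothetical registry; a real system would hold many more specialists.
AGENTS = [security_agent, bug_agent]


def review(added_lines):
    """Run every agent over the added lines and merge findings by line number."""
    findings = []
    for agent in AGENTS:
        findings.extend(agent(added_lines))
    return sorted(findings, key=lambda f: f.line_no)


added_lines = [
    (10, 'api_key = "abc123"'),
    (11, "try:"),
    (12, "    run()"),
    (13, "except:"),
    (14, "    pass"),
]
for finding in review(added_lines):
    print(finding.line_no, finding.agent, finding.message)
```

Each reviewer only knows its own domain, so adding a new specialty means adding one function to the registry without touching the others.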

Codebase-Aware Contextual Analysis

diffray does not review code changes in isolation. It analyzes pull requests in the context of your whole repository, including the existing architecture, historical patterns, and previous decisions. This context-awareness filters out irrelevant suggestions and keeps feedback practical and directly applicable to your project, reducing false positives and increasing developer trust in the AI's recommendations.

Actionable and Precise Feedback

The platform is designed to eliminate noise. By leveraging its multi-agent system and contextual understanding, diffray generates concise, highly actionable feedback that developers can immediately use to improve their code. Instead of vague warnings, it provides specific, justified recommendations with clear explanations, often including code snippets or direct links to problematic lines. This precision transforms AI review from a curiosity into a reliable, time-saving partner in the development workflow.

Seamless Platform Integration

diffray is built for easy adoption within existing developer workflows. It integrates directly and seamlessly with popular Git hosting services including GitHub, GitLab, and Bitbucket. The setup process is straightforward, allowing teams to connect their repositories and start receiving intelligent code reviews within minutes, without disrupting their current tools or processes. This frictionless integration is key to enabling widespread team usage and realizing the platform's productivity benefits.

Fallom

End-to-End LLM Tracing

Fallom provides comprehensive tracing for every LLM call, capturing granular details in real-time. This includes the full prompt, model output, any function or tool calls made, token counts, latency metrics, and the calculated cost per call. This deep visibility is essential for debugging complex agentic workflows, understanding performance bottlenecks, and gaining a precise view of operational costs.
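To make the list of captured fields concrete, here is a minimal sketch of what one trace record could look like and how a per-call cost can be derived from token counts. The schema, the `LLMTrace` name, the model name, and the prices are all assumptions for illustration; they are not Fallom's actual data format or any provider's real pricing.

```python
from dataclasses import dataclass, field

# Hypothetical per-1K-token prices in USD; real prices vary by provider and model.
PRICE_PER_1K = {"example-model": {"input": 0.50, "output": 1.50}}


@dataclass
class LLMTrace:
    """One traced LLM call (illustrative schema only)."""
    model: str
    prompt: str
    output: str
    input_tokens: int
    output_tokens: int
    latency_ms: float
    tool_calls: list = field(default_factory=list)

    def cost_usd(self) -> float:
        # Cost = input tokens and output tokens, each billed at its own rate.
        price = PRICE_PER_1K[self.model]
        return (self.input_tokens / 1000 * price["input"]
                + self.output_tokens / 1000 * price["output"])


trace = LLMTrace(
    model="example-model",
    prompt="Summarize this ticket.",
    output="Customer cannot log in.",
    input_tokens=2000,
    output_tokens=1000,
    latency_ms=840.0,
    tool_calls=["lookup_ticket"],
)
print(f"{trace.cost_usd():.2f}")  # prints 2.50
```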

Cost Attribution and Transparency

The platform offers detailed cost tracking and attribution, breaking down spend by model, team, user, or customer. This provides full financial transparency for budgeting, forecasting, and internal chargeback processes. Teams can monitor live usage, set alerts for budget overruns, and make informed decisions about model selection based on both performance and cost-efficiency.
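Attribution of this kind comes down to grouping per-call costs by a chosen dimension and comparing the totals with a budget. The following sketch assumes each record is a plain dictionary with a `cost_usd` field plus arbitrary labels; the function names and the sample figures are hypothetical.

```python
from collections import defaultdict


def attribute_spend(records, dimension):
    """Sum cost_usd per value of the given dimension (e.g. 'model' or 'team')."""
    totals = defaultdict(float)
    for record in records:
        totals[record.get(dimension, "unattributed")] += record["cost_usd"]
    return dict(totals)


def over_budget(totals, budgets):
    """Return the keys whose spend exceeds their budget; no budget means no alert."""
    return sorted(key for key, spent in totals.items()
                  if spent > budgets.get(key, float("inf")))


records = [
    {"model": "model-a", "team": "search", "cost_usd": 1.25},
    {"model": "model-b", "team": "search", "cost_usd": 0.75},
    {"model": "model-a", "team": "support", "cost_usd": 2.0},
]
by_team = attribute_spend(records, "team")
print(by_team)
print(over_budget(by_team, {"search": 1.5, "support": 5.0}))
```

The same records can be regrouped by any label they carry, which is what makes chargeback by team, user, or customer possible from a single stream of traces.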

Compliance-Ready Audit Trails

Fallom is built for regulated industries, providing immutable, complete audit trails of every AI interaction. This includes full input/output logging, model versioning, and user consent tracking. These features are designed to help organizations meet stringent regulatory requirements such as the EU AI Act, SOC 2, and GDPR, ensuring accountability and traceability in AI operations.

Session Tracking and User Context

Group individual traces into complete user sessions to understand the full customer journey. This feature provides context for interactions, allowing teams to analyze how users engage with AI features, troubleshoot specific customer issues, and calculate the total cost-to-serve per user or account, enabling better product and support insights.
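A rough sketch of session grouping, assuming every trace carries a `session_id`, a `user_id`, a start timestamp, and a cost. The field names and both helper functions are illustrative assumptions, not Fallom's API.

```python
from collections import defaultdict


def build_sessions(traces):
    """Group traces by session_id and order each session by start time."""
    sessions = defaultdict(list)
    for trace in traces:
        sessions[trace["session_id"]].append(trace)
    for items in sessions.values():
        items.sort(key=lambda t: t["started_at"])
    return dict(sessions)


def cost_to_serve(sessions):
    """Total cost per user across all of that user's sessions."""
    totals = defaultdict(float)
    for items in sessions.values():
        for trace in items:
            totals[trace["user_id"]] += trace["cost_usd"]
    return dict(totals)


traces = [
    {"session_id": "s1", "user_id": "u1", "started_at": 2, "cost_usd": 0.5},
    {"session_id": "s1", "user_id": "u1", "started_at": 1, "cost_usd": 0.25},
    {"session_id": "s2", "user_id": "u2", "started_at": 3, "cost_usd": 1.0},
]
sessions = build_sessions(traces)
print(cost_to_serve(sessions))
```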

Use Cases

diffray

Accelerating Pull Request Reviews for Engineering Teams

Development teams can integrate diffray to automatically review every pull request. The AI agents act as a first-pass reviewer, catching common bugs, security issues, and style violations before human reviewers engage. This reduces the cognitive load on senior developers and, according to diffray, can cut the average time spent on PR review from 45 minutes to 12 minutes per week, allowing teams to merge high-quality code faster and shorten release cycles.

Enhancing Code Security and Compliance

Security teams and developers responsible for maintaining secure codebases use diffray as a proactive security guardrail. The dedicated security agents continuously scan code changes for vulnerabilities like hard-coded secrets, injection flaws, and insecure dependencies within the context of the application. This provides an automated, consistent layer of security review that helps prevent critical issues from being introduced into production, aiding in compliance with security standards.

Onboarding and Mentoring Junior Developers

diffray serves as an always-available mentor for junior developers or engineers new to a codebase. By providing instant, contextual feedback on best practices, architectural patterns, and project-specific conventions, it helps them learn and adhere to team standards more quickly. This reduces the review burden on senior team members while improving the quality and consistency of code contributed by less experienced developers, fostering better skill development.

Maintaining Code Quality in Legacy Systems

Teams working with large or legacy codebases use diffray to manage technical debt and ensure new changes don't degrade system stability. The codebase-aware analysis understands the existing (potentially complex) architecture, allowing diffray to suggest improvements that are compatible with the current system while gently guiding refactoring efforts. It helps prevent the introduction of new anti-patterns and performance bottlenecks into fragile systems.

Fallom

Production Debugging and Performance Optimization

Engineering teams use Fallom to rapidly diagnose failures and latency issues in live AI applications. By examining timing waterfalls and tool call sequences, developers can pinpoint exactly where in a multi-step agent workflow a problem occurred, whether it's a slow LLM call, a failing tool, or a logic error, drastically reducing mean time to resolution (MTTR).
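The idea behind a timing waterfall can be sketched with a list of spans, each with a start time, an end time, and a status. The span fields, function names, and sample workflow below are hypothetical, and the renderer assumes the trace starts at 0 ms.

```python
def slowest_span(spans):
    """Return the span with the longest duration."""
    return max(spans, key=lambda s: s["end_ms"] - s["start_ms"])


def first_failure(spans):
    """Return the earliest span that ended in error, or None if all succeeded."""
    failed = [s for s in spans if s["status"] == "error"]
    return min(failed, key=lambda s: s["start_ms"]) if failed else None


def render_waterfall(spans, width=40):
    """Draw one text bar per span, offset and scaled to the trace duration."""
    total = max(s["end_ms"] for s in spans)
    lines = []
    for s in sorted(spans, key=lambda s: s["start_ms"]):
        offset = round(s["start_ms"] / total * width)
        length = max(1, round((s["end_ms"] - s["start_ms"]) / total * width))
        lines.append(f"{s['name']:<14}{' ' * offset}{'#' * length}")
    return "\n".join(lines)


spans = [
    {"name": "plan", "start_ms": 0, "end_ms": 300, "status": "ok"},
    {"name": "search_tool", "start_ms": 300, "end_ms": 1900, "status": "ok"},
    {"name": "summarize", "start_ms": 1900, "end_ms": 2400, "status": "error"},
]
print(render_waterfall(spans))
print("slowest:", slowest_span(spans)["name"])
print("failed:", first_failure(spans)["name"])
```

In this sample the slow step (the tool call) and the failing step (the final LLM call) are different spans, which is the distinction a waterfall view is meant to surface.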

Financial Governance and Cost Control

Finance and engineering leadership utilize Fallom's cost attribution features to monitor and control AI expenditure. By tracking spend per model, team, or product feature, organizations can identify cost drivers, optimize expensive workflows, implement chargebacks, and ensure AI initiatives remain within budget, transforming AI costs from a black box into a manageable line item.

Regulatory Compliance and Auditing

Compliance and legal teams leverage Fallom to demonstrate adherence to AI regulations. The platform's immutable audit trails, consent tracking, and detailed logging provide the necessary evidence for audits required by standards like the EU AI Act or SOC 2. Privacy mode features also allow sensitive data to be redacted while maintaining operational telemetry.

Model Evaluation and A/B Testing

Product and ML teams employ Fallom to run evaluations, test new prompts, and safely roll out new model versions. The platform facilitates A/B testing by splitting traffic between models or prompt versions, allowing teams to compare performance, cost, and quality metrics like accuracy or hallucination rates before committing to a full production deployment.
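One common way to split traffic between two variants is deterministic hashing, so that the same user always lands in the same arm. This is a generic sketch of that technique, not a description of how Fallom implements its splits; the function name, experiment name, and 20% share are assumptions.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, treatment_share: float) -> str:
    """Map a user to 'treatment' or 'control' with a stable hash-based bucket."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    # Interpret the first 8 bytes as a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "treatment" if bucket < treatment_share else "control"


# Route roughly 20% of users to the new prompt version.
users = [f"user-{i}" for i in range(10_000)]
assignments = [assign_variant(u, "prompt-v2", 0.2) for u in users]
print(assignments.count("treatment") / len(users))
```

Including the experiment name in the hash keeps assignments independent across experiments, so a user in the treatment arm of one test is not systematically in the treatment arm of the next.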

Overview

About diffray

diffray is a next-generation, AI-powered code review platform engineered to solve the core frustrations of modern development teams: noisy, ineffective feedback and missed critical issues. It moves beyond the limitations of traditional single-model AI tools by implementing a multi-agent architecture. This system deploys over 30 specialized AI agents, each an expert in a distinct domain such as security vulnerabilities, performance bottlenecks, bug patterns, coding best practices, and even SEO considerations for relevant code. This targeted, domain-specific approach allows diffray to conduct deep, contextual investigations into code changes, replacing generic and speculative suggestions with precise, actionable feedback.

Crucially, diffray is codebase-aware. It analyzes pull requests and commits within the full context of your repository's existing code, architecture, and historical decisions, ensuring recommendations are relevant and practical.

The primary value proposition is an increase in developer productivity and code quality. diffray aims to cut the average time spent on PR review from 45 minutes to 12 minutes per week by filtering out noise and providing trustworthy insights, allowing developers to focus on logic and innovation. It is built for development teams of all sizes who are serious about improving their code security, performance, and maintainability without sacrificing velocity. The platform integrates with GitHub, GitLab, and Bitbucket.

About Fallom

Fallom is an AI-native observability platform engineered specifically for monitoring and optimizing Large Language Model (LLM) and AI agent workloads in production environments. It provides engineering, product, and compliance teams with comprehensive, real-time visibility into every AI interaction, moving organizations from blind deployment to data-driven management of their AI applications. The platform's core value proposition is delivering end-to-end tracing for LLM calls, capturing granular details such as prompts, outputs, tool calls, token usage, latency, and per-call costs.

Built on the open standard OpenTelemetry (OTEL), Fallom offers a single, lightweight SDK that allows teams to instrument their applications in minutes, eliminating vendor lock-in. It is designed for enterprises that require scale, reliability, and compliance, featuring session-level context for user journeys, timing waterfalls for complex multi-step agents, and robust audit trails. By centralizing observability, Fallom empowers teams to debug issues faster, monitor usage live, attribute spend accurately across models and teams, and ensure their AI systems are performant, cost-effective, and compliant with regulations like the EU AI Act, SOC 2, and GDPR.

Frequently Asked Questions

diffray FAQ

How does diffray differ from other AI coding assistants?

diffray is fundamentally different due to its multi-agent, codebase-aware architecture. While most AI assistants use a single general-purpose model to comment on code, diffray deploys a team of over 30 specialized agents, each an expert in a specific domain like security or performance. Furthermore, it reviews code within the full context of your repository, not just the changed lines. This results in far more accurate, relevant, and actionable feedback with significantly fewer false positives.

What Git platforms does diffray integrate with?

diffray offers seamless, native integrations with the three major Git hosting platforms: GitHub, GitLab, and Bitbucket. You can connect your repositories from any of these services directly to diffray. The integration typically involves installing a diffray app or bot into your organization/workspace, which then automatically reviews pull requests or specific branches as configured, posting feedback directly into the PR thread.

Is there a free plan available?

Yes, diffray offers a free plan specifically designed to support open-source projects. This allows maintainers of public repositories to leverage the platform's advanced code review capabilities at no cost. For private repositories used by teams and companies, diffray provides a straightforward trial process so you can evaluate its effectiveness within your private codebase before committing to a paid subscription.

How does diffray handle the privacy and security of my code?

diffray is designed with enterprise-grade security practices. When analyzing your code, it processes the data securely and does not use your proprietary code to train its underlying AI models. You retain full ownership of your code. For details on data handling, encryption, and compliance standards, review diffray's security whitepaper and privacy policy.

Fallom FAQ

How does Fallom integrate with my existing AI applications?

Fallom integrates via a single, lightweight OpenTelemetry (OTEL)-native SDK. You can instrument your applications in under five minutes by adding the SDK, which automatically captures traces from LLM calls, tool usage, and custom spans. Being OTEL-based, it avoids vendor lock-in and works with a wide range of LLM providers and frameworks.

Does Fallom store sensitive user data from prompts and responses?

Fallom offers a configurable Privacy Mode to address this concern. You can choose to disable full content capture for sensitive data, redact specific fields, or log only metadata (like token counts and latency) while protecting confidential information. This allows you to keep operational telemetry for debugging while adhering to data privacy policies.
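The three options can be sketched as a filter applied to a trace before it is stored. The mode names, the `apply_privacy` function, and the email-only redaction rule are assumptions for illustration; Fallom's actual Privacy Mode settings may differ.

```python
import re

# Simple email matcher used as the only redaction rule in this sketch.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CONTENT_FIELDS = ("prompt", "output")


def apply_privacy(trace, mode):
    """Return a copy of the trace filtered by a hypothetical privacy mode."""
    if mode == "full":
        return dict(trace)
    if mode == "redact":
        filtered = dict(trace)
        for key in CONTENT_FIELDS:
            filtered[key] = EMAIL.sub("[REDACTED]", filtered[key])
        return filtered
    if mode == "metadata_only":
        # Drop prompt and output entirely; keep token counts, latency, etc.
        return {k: v for k, v in trace.items() if k not in CONTENT_FIELDS}
    raise ValueError(f"unknown privacy mode: {mode}")


trace = {
    "prompt": "Email jane@example.com the invoice",
    "output": "Sent.",
    "tokens": 42,
    "latency_ms": 310,
}
print(apply_privacy(trace, "redact")["prompt"])  # Email [REDACTED] the invoice
print(apply_privacy(trace, "metadata_only"))
```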

Can Fallom track costs for different teams or projects?

Yes, detailed cost attribution is a core feature. Fallom automatically breaks down costs by the LLM model used. You can further enrich traces with custom attributes (like team ID, project name, or user ID) to slice and dice spending across any dimension, enabling precise showback/chargeback and helping teams understand their AI resource consumption.

Is Fallom suitable for large-scale enterprise deployments?

Yes. Fallom is engineered for enterprise scale, reliability, and security. It handles high-volume tracing data, offers robust access controls, and provides features essential for large organizations, including comprehensive audit trails, SOC 2/GDPR-ready compliance tools, and the ability to monitor complex, multi-agent AI systems across entire product suites.

Alternatives

diffray Alternatives

diffray is a next-generation AI-powered code review platform in the development tools category. It uses a multi-agent architecture to provide deep, contextual analysis of code changes, focusing on catching real bugs and security issues rather than generating generic nitpicks.

Users may explore alternatives for various reasons, such as budget constraints, specific feature requirements not covered by a particular tool, or the need for integration with a different development platform or version control system. The search for the right tool is often driven by the unique workflow and technical stack of a team.

When evaluating alternatives, key considerations include the depth and accuracy of the analysis, the tool's ability to understand your full codebase context, integration capabilities with your existing development environment, and the overall value in terms of reducing review time and improving code quality. The goal is to find a solution that effectively balances powerful automation with actionable, relevant feedback for your developers.

Fallom Alternatives

Fallom is an AI-native observability platform designed for monitoring and optimizing Large Language Model (LLM) and AI agent operations in production. It falls into the category of specialized development tools for AI application management, providing end-to-end tracing, cost analysis, and compliance features.

Users may explore alternatives to Fallom for various reasons, including budget constraints, specific feature requirements not covered, or a need for a platform that integrates more tightly with their existing tech stack. Different organizations have unique priorities, such as open-source flexibility, different pricing models, or specialized support for certain cloud providers or agent frameworks.

When evaluating an alternative, key considerations should include the depth of LLM and agent tracing capabilities, support for compliance and audit trails, ease of integration, the risk of vendor lock-in, scalability for enterprise workloads, and the total cost of ownership. The goal is to find a solution that delivers the necessary visibility and control for your specific AI deployment.